My experience building GPTs using OpenAI's GPT Builder

In the ever-evolving landscape of artificial intelligence, the ability to rapidly create and deploy specialized applications without coding is becoming a reality thanks to platforms like OpenAI's GPT Builder. As someone who briefly explored this technology, I wanted to share my experience developing miniature yet powerful apps ('GPTs') and successfully publishing them without writing any code.

Bringing Abstract Ideas to Life

First of all - what are these 'GPTs' exactly?

GPTs are in essence 'scoped AI' mini apps - you are still using OpenAI's larger general-purpose LLMs, but focusing them on behaviors that fulfill narrower applications and requirements. Consequently, GPTs can vary widely in their functions: creating content, assisting with language translation, even generating code. They learn from interactions and can be tailored to specific needs.

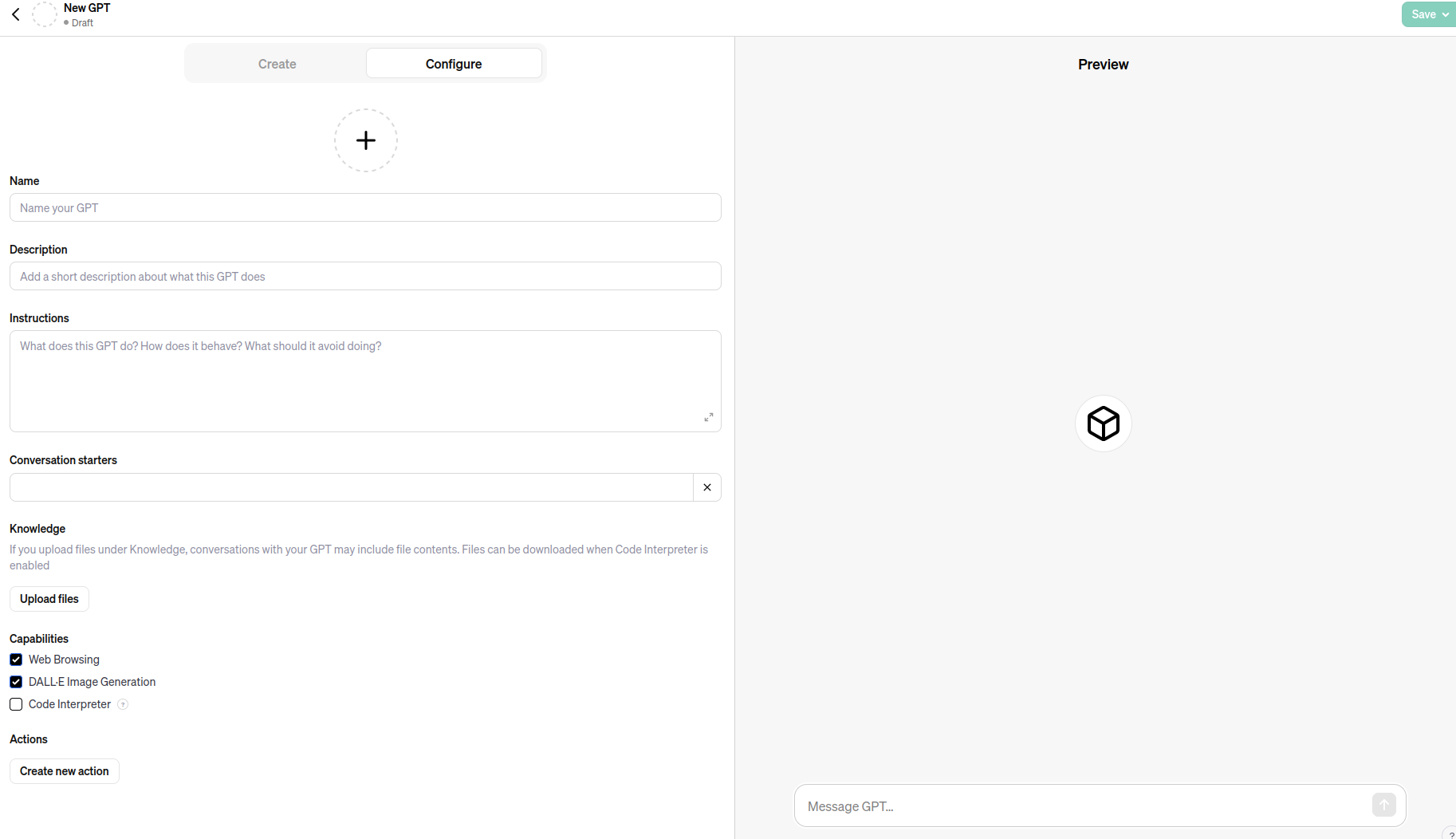

The 'GPT Builder' helps you scope these applications as unique entities. It is all done within the OpenAI ecosystem, meaning you can only build these using an OpenAI account and OpenAI's interfaces, and you can only distribute them within the same ecosystem to other OpenAI users.

Essentially you 'scope' a mini app with the assistance of a specialised ChatGPT interface (there is a brief OpenAI how-to here), and then, once it is complete, you distribute it to other users through the OpenAI GPT Store.

So, where to get started? John Chodacki, Paul Shannon and I wrote an article at the beginning of 2023 about the utility of AI in scholarly communications. In it we outlined a taxonomy of operations in scholarly communications. Our first pass was the following:

- Extract: Identify and isolate specific entities or data points within the content.

- Validate: Verify the accuracy and reliability of the information.

- Generate: Produce new content or ideas, such as text or images.

- Analyse: Examine patterns, relationships, or trends within the information.

- Reformat: Modify and adjust information to fit specific formats or presentation styles.

- Discover: Search for and locate relevant information or connections.

- Translate: Convert information from one language or form to another.

So I started by thinking about this list and how I could make some apps that provided something useful for scholarly comms that wasn't the 'AI author', which most discussions in this domain seem to gravitate towards.

Immediately I thought of extracting metadata from documents and generating accessible image descriptions. I also thought it would be interesting to test out reviewer recommendations and see how it goes.

My journey began simply - with an idea for a new application. I described the concept in plain English and inputted it into the GPT Builder interface. Rather than a structured technical specification, I started with a rough, open-ended description, mirroring how I conceptualize early-stage projects.

The Builder, which puts GPT behind a straightforward user interface, allowed me to refine my app's capabilities interactively through natural language instructions. This felt more like collaborating via conversation than programming - an iterative learning process for both myself and the AI.

Deploying Prototypes to Market

Once I had a demonstrable prototype, publishing to the GPT Store was seamless. My apps - including the Academic Reviewer Scout, Accessible Image Creator, and JATS Content Extractor - each serve unique functions (with links through to each):

- Academic Reviewer Scout: Streamlines finding suitable reviewers for academic papers.

- Accessible Image Creator: Generates accessible alt text descriptions for images to aid visually impaired users.

- JATS Content Extractor: Efficiently extracts entities from documents and outputs them in JSON or JATS format.

Just to say, these are all very much beta. If you do try them out and they don't work as expected or give odd results, please let me know! I'd love to learn more, which will help me improve them. Some might not turn out to be very good at what they do, but only experimentation will tell.
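To make the 'JSON or JATS' output of the JATS Content Extractor a little more concrete, here is a minimal Python sketch of how the same extracted metadata could be expressed in both forms. This is not how the GPT works internally. The JSON field names (`title`, `authors`, `given_names`, `surname`) and the sample values are made up for illustration; only the JATS element names (`article-meta`, `title-group`, `article-title`, `contrib-group`, `contrib`, `name`, `surname`, `given-names`) come from the JATS tag set.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical extractor output. The field names and values are
# assumptions for illustration, not the GPT's actual output schema.
record_json = """
{
  "title": "An Example Article",
  "authors": [
    {"given_names": "Jane", "surname": "Doe"},
    {"given_names": "Ravi", "surname": "Kumar"}
  ]
}
"""


def record_to_jats(record: dict) -> ET.Element:
    """Build a minimal JATS <article-meta> fragment from a metadata record."""
    article_meta = ET.Element("article-meta")

    # Title lives in <title-group><article-title>.
    title_group = ET.SubElement(article_meta, "title-group")
    ET.SubElement(title_group, "article-title").text = record["title"]

    # Each author becomes a <contrib contrib-type="author"> with a <name>.
    contrib_group = ET.SubElement(article_meta, "contrib-group")
    for author in record["authors"]:
        contrib = ET.SubElement(contrib_group, "contrib", {"contrib-type": "author"})
        name = ET.SubElement(contrib, "name")
        ET.SubElement(name, "surname").text = author["surname"]
        ET.SubElement(name, "given-names").text = author["given_names"]
    return article_meta


record = json.loads(record_json)
jats_xml = ET.tostring(record_to_jats(record), encoding="unicode")
print(jats_xml)
```

A real JATS document needs far more than this fragment (front matter, identifiers, affiliations and so on), so treat it only as an illustration of the mapping between the two formats.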

Building in Explainability

In addition to performing specific functions, I decided each app should also serve an educational purpose - they are designed to teach users about the very processes they facilitate. While the apps are still in beta, the potential of coupling function with education is pretty clear to me.

Future Integration

The apps are presently confined to the OpenAI interface, but integrating API connections could vastly expand their capabilities. One can envision the Image Creator fetching relevant graphics from the web, or the Academic Scout directly accessing journal databases (this is already possible if those databases are exposed via an API). The possibilities for increased functionality through external service integration are pretty interesting.

Concluding Perspectives

My experience with GPT Builder offered a glimpse into an AI-enabled future where app development is accessible, intuitive, and remarkably powerful. I'll keep pushing these apps forward and see if they gain any traction. I will also extend the functionality of each and build some new mini apps as I get ideas. I have come to understand a few things already. First, the API integrations (in and out) are hugely powerful. Second, the scope of the apps is confined by the OpenAI experience, which is what it is. Third, coupling functionality with the opportunity for people to learn more about best practices at the same time seems a pretty attractive idea.

Lastly, I actually tried building a metadata extraction app and a PDF parser via OpenAI GPT (and other venues) early in 2023. The problem I had was that the GPTs would do things like 'make up' the author list. They would format the JATS correctly, but the author names were fictional (despite a list of authors being supplied in the text). Now, however, this level of problem seems to have gone away - the extracted author names are actually those in the texts tested. GPT-4 still does not seem able to parse entire PDF docs verbatim and convert them into another format, but at least the bits I can now extract (e.g. the abstract) are verbatim and not completely arbitrary made-up passages. So in these areas (both of interest to me) there seems to have been forward movement.
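One simple way to catch the 'made up author' problem is to check whether each extracted value actually occurs in the source text. The Python sketch below is only an illustration of that idea, not the method I used when testing. The function names, sample text and author names are all hypothetical, and a plain substring check like this will miss legitimate variations such as initials or reordered names.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so line breaks do not cause false alarms."""
    return re.sub(r"\s+", " ", text).strip().lower()


def find_unsupported(extracted: list[str], source_text: str) -> list[str]:
    """Return extracted strings that do not occur verbatim in the source text."""
    haystack = normalize(source_text)
    return [item for item in extracted if normalize(item) not in haystack]


# Made-up source text and extraction result, for illustration only.
source_text = """A Sample Paper
Jane Doe and Ravi
Kumar
Abstract: This is a placeholder abstract."""

extracted_authors = ["Jane Doe", "Ravi Kumar", "Maria Silva"]

# Any name listed here was not found in the source and deserves a manual look.
unsupported = find_unsupported(extracted_authors, source_text)
print(unsupported)
```

The same check works for longer passages such as an extracted abstract, which is a quick way to confirm that the text really is verbatim.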

The GPT Store launched this week... let's see if my apps get any use!

© Adam Hyde, 2024, CC-BY-SA

Image public domain, created by MidJourney from prompts by Adam.
